I had to refactor a logistics connector. The kind that sends actual parcels to actual paying customers with expectations, customers who are not as enthusiastic about vibe coding as the average X user.

The refactor had two goals: hardening security and introducing queue management for order processing. Since the code already existed, it seemed logical to trust an LLM to implement the refactored version.

Keep in mind, I’ve been maintaining this connector for the last eight years across a few hundred installations. I’m the one who answers the call when things… drift. And drift they do: 70+ edge cases identified over the years.

- Ralph Wiggum was all the hype in December. That produced an unreadable monolith.

- I went for vibe coding. That’s where I realised bigger specs could be useful.

- I tried refactoring code into new code. As if the LLM could architect.

- Tried insults. That took a toll, mostly on me.

- I started looping through fixes, as it seems most people are doing now.

- Considered whether I would spend the rest of my career deciphering machine-generated code.

- I struggled all the way with how to impose truths and constraints on a probabilistic agent.

I considered coding the refactor by hand, like a caveman (a caveman who possesses all the domain logic in his head).

Pondered asking Claude Code to throttle its implementation speed, so I could forget it codes ~100 times faster than me.

The problem isn’t that LLMs can’t code. It’s that they won’t stop.

Moving the goalpost

After iteration seven, I realized that “implement what the spec says” was the wrong goal: there are too many ways to translate intent into code, and a probabilistic engine will pick one you didn’t expect.

The goal is to convey logic to the LLM, and natural-language specs are not sufficient for that. Of course, the perfect spec, aka the implemented code itself, could be a way.

That’s how we did it, back in the day.

We’ve always told machines what to do in a language that we can read and they can follow. Assembly, C, PHP — each layer further from the hardware, closer to intent.

The LLM is the next compiler. Why not write what we mean, and let it handle the syntax?

The breaking point

Everything “worked”. Seventh iteration. Clean. Magnificent. 49 files, $30 of API consumption, 100% syntax OK.

And one critical business action was coded as async (pushed into the queue instead of triggered immediately). Ultimate facepalm. Words were said.

It’s a refactor. The existing code made it plain that this action had to be immediate. But the refactor’s new queue management for regular processes nudged the LLM to apply it everywhere (“it’s better to do it async, no?”).
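That class of bug thrives on an implicit default. One defensive pattern is to make every action declare its execution mode, with no default at all, so an undeclared action fails loudly instead of drifting into the queue. A minimal Python sketch for illustration only: the connector’s real code isn’t shown here, and `Dispatcher`, `Mode`, the action names and the in-memory queue are all hypothetical.

```python
from enum import Enum
from typing import Callable


class Mode(Enum):
    IMMEDIATE = "immediate"  # run now, in the current request
    QUEUED = "queued"        # hand off to the background queue


class UndeclaredAction(Exception):
    """Raised when an action has no declared mode: stop and ask, never guess."""


class Dispatcher:
    """Hypothetical dispatcher: every action must state how it runs."""

    def __init__(self) -> None:
        self._actions: dict[str, tuple[Mode, Callable[..., object]]] = {}
        self.queue: list[tuple[str, tuple]] = []  # stand-in for a real job queue

    def register(self, name: str, mode: Mode, handler: Callable[..., object]) -> None:
        # `mode` is a required argument: there is deliberately no default.
        self._actions[name] = (mode, handler)

    def dispatch(self, name: str, *args: object) -> object:
        if name not in self._actions:
            raise UndeclaredAction(f"{name!r} has no declared mode")
        mode, handler = self._actions[name]
        if mode is Mode.IMMEDIATE:
            return handler(*args)
        self.queue.append((name, args))  # queued: nothing runs yet
        return None


# Usage with hypothetical actions.
d = Dispatcher()
d.register("push_order", Mode.IMMEDIATE, lambda oid: f"pushed {oid}")
d.register("sync_stock", Mode.QUEUED, lambda: "synced")
print(d.dispatch("push_order", 42))  # pushed 42
d.dispatch("sync_stock")
print(d.queue)  # [('sync_stock', ())]
```

The point is not the dispatcher itself but where the decision lives: “immediate or queued” becomes a line someone has to write down, rather than something the implementer infers from the surrounding code.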

“An error is all the more dangerous in proportion to the degree of truth which it contains.”

— Henri-Frédéric Amiel

97% truth, enough to pass every test and get me sweating a few weeks down the line.

I would hazard that the same – justified – fear is keeping many organisations from shipping AI-generated code to production (unless you’re MSFT).

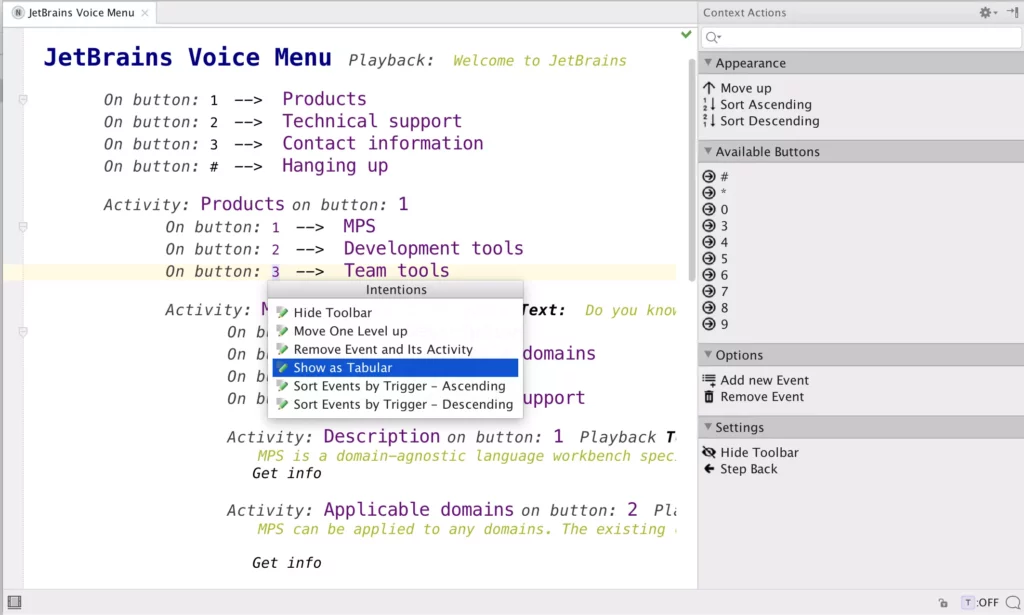

Here comes the DSL

Domain-Specific Language.

It’s an old trick coders tried to pull on business people in the 2000s, trying to get them to speak a proper declarative language. That went as well as expected.

But the idea was right: describe what, not how. We just had the wrong audience. Business people don’t want to write or read structured logic. LLMs thrive on it.

Turns out they can read it as well.

https://x.com/patio11/status/2018656532112814559

Natural language is a lossy prompt

The prompt is the problem. Natural language is ambiguous. Ambiguity leads to interpretation.

LLMs won’t stop coding

A current LLM can write 1,000+ lines a minute. A current human takes 2 hours to review them.

Constraint must be the limiting factor, not coverage.

The prompt tells the LLM when to stop; the DSL tells it where.

It’s the simplest check: if it’s not in the DSL → stop and tell the user.

It’s not even triggered during implementation: once the DSL is in place, you can ask the LLM to find gaps in it before any code is written.
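The LLM does that gap-hunting for you, but the simplest kind of gap can even be checked mechanically. A rough Python sketch, assuming a hypothetical convention in which every fallible call must be followed by an indented `on fail` line; the grammar is inferred from the push_order DSL in this post, and the `FALLIBLE` set is an illustrative choice, not part of the original.

```python
import re

# Hypothetical convention: any call to one of these fallible operations
# must be followed by an "on fail" line saying what happens next.
FALLIBLE = {"build_xml", "sftp_upload", "mark_as_sent"}

CALL = re.compile(r"(\w+)\(")  # a name immediately followed by "("


def find_gaps(dsl: str, fallible: set[str] = FALLIBLE) -> list[str]:
    """Return fallible calls that have no 'on fail' clause right after them."""
    lines = [ln.strip() for ln in dsl.splitlines() if ln.strip()]
    gaps = []
    for i, line in enumerate(lines):
        names = [n for n in CALL.findall(line) if n in fallible]
        if not names:
            continue
        next_line = lines[i + 1] if i + 1 < len(lines) else ""
        if not next_line.startswith("on fail"):
            gaps.extend(names)
    return gaps


PUSH_ORDER_DSL = """
on push_order : task (order_id)
    order = get_order(order_id)
    guard: !order : log error + return false
    guard: status != 'processing' : skip
    guard: meta '_sent_to_logistics' : skip
    xml = build_xml(order)
        on fail : log + mail admin + return false
    sftp_upload(xml)
        on fail : log + queue retry +3min
    order.mark_as_sent()
    return true
"""

print(find_gaps(PUSH_ORDER_DSL))  # ['mark_as_sent']
```

Under this convention it flags `mark_as_sent`: the DSL doesn’t say what happens if the upload succeeds but marking the order fails. That is a question to settle in a dozen lines of DSL, not in production.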

The prompt is a negotiation; the DSL is a contract.

Avoid burnout

A DSL is lean. A few dozen lines of domain mechanics is the proper cognitive load for a sapient monkey.

Let the LLM manage hundreds of lines of code.

And argue with it over DSL. Not code. The LLM has opinions on code. Strong ones. Naming conventions, design patterns, error handling styles — it’s absorbed every Stack Overflow argument ever written. You’re not winning that debate.

But over DSL? That’s your domain. The LLM has no opinion on your business logic.

How it looks

English prompt

Write a function that pushes an order to the logistics provider. Check if the order exists, make sure it hasn't been sent already, build the XML payload, send it via SFTP, and handle errors gracefully. Use native functions when available. Make it robust.

“Handle errors gracefully”: the LLM will decide what that means. You’ll find out in production.

Structured spec

    push_order($order_id)
    Purpose: Send order to logistics provider via SFTP
    Input: order ID
    Preconditions:
      - Order must exist
      - Order status must be 'processing'
      - The meta tagging the order as already sent must not be set
    Process:
      1. Build XML payload from order data
      2. Connect to SFTP (credentials from settings)
      3. Upload XML file
      4. Mark order as sent (update meta)
      5. Add order note
    Error handling:
      - Order missing: log error, return false
      - SFTP failure: log, schedule retry via Action Scheduler
      - XML failure: log error, return false

Better. But 15 lines of spec will produce 80 lines of code, and the LLM still decides how to structure them.

DSL

    on push_order : task (order_id)
        order = get_order(order_id)
        guard: !order : log error + return false
        guard: status != 'processing' : skip
        guard: meta '_sent_to_logistics' : skip
        xml = build_xml(order)
            on fail : log + mail admin + return false
        sftp_upload(xml)
            on fail : log + queue retry +3min
        order.mark_as_sent()
        return true

Every decision is explicit. Every guard is visible. The LLM colors inside the lines.
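To see what coloring inside the lines can look like, here is one literal reading of the push_order DSL, sketched in Python for illustration (the real connector isn’t Python). Assumptions, all hypothetical: the collaborators (`get_order`, `build_xml`, `sftp_upload`, `log`, `mail_admin`, `queue_retry`) are injected stand-ins, “on fail” means the call raises, and `skip` means “return None without doing anything”.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class Order:
    id: int
    status: str
    meta: dict = field(default_factory=dict)

    def mark_as_sent(self) -> None:
        self.meta["_sent_to_logistics"] = True


@dataclass
class Deps:
    """Hypothetical collaborators, injected so the sketch stays testable."""
    get_order: Callable[[int], Optional[Order]]
    build_xml: Callable[[Order], str]             # assumed to raise on failure
    sftp_upload: Callable[[str], None]            # assumed to raise on failure
    log: Callable[[str], None]
    mail_admin: Callable[[str], None]
    queue_retry: Callable[[str, int, int], None]  # (task, order_id, delay in s)


def push_order(order_id: int, d: Deps) -> Optional[bool]:
    order = d.get_order(order_id)
    # guard: !order : log error + return false
    if order is None:
        d.log(f"error: order {order_id} not found")
        return False
    # guard: status != 'processing' : skip  ("skip" read as: return None)
    if order.status != "processing":
        return None
    # guard: meta '_sent_to_logistics' : skip
    if order.meta.get("_sent_to_logistics"):
        return None
    # xml = build_xml(order) / on fail : log + mail admin + return false
    try:
        xml = d.build_xml(order)
    except Exception as exc:
        d.log(f"xml build failed for order {order_id}: {exc}")
        d.mail_admin(f"XML build failed for order {order_id}")
        return False
    # sftp_upload(xml) / on fail : log + queue retry +3min
    try:
        d.sftp_upload(xml)
    except Exception as exc:
        d.log(f"sftp upload failed for order {order_id}: {exc}")
        d.queue_retry("push_order", order_id, 180)
        # ASSUMPTION: the DSL names no return value here; under the
        # "stop and ask" rule, this is a question for the DSL's author.
        return None
    order.mark_as_sent()
    return True


# Usage with in-memory fakes.
orders = {1: Order(1, "processing")}
deps = Deps(get_order=orders.get, build_xml=lambda o: f"<order id='{o.id}'/>",
            sftp_upload=lambda x: None, log=print, mail_admin=print,
            queue_retry=lambda *a: None)
print(push_order(1, deps))  # True
print(push_order(1, deps))  # None (already sent, so skipped)
```

Every branch maps back to one DSL line. The one place the sketch had to guess (the return value after queuing a retry) is exactly where the DSL is silent.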

And the prompt that goes with the DSL:

Implement the following DSL into <current language of the day>. If a behavior is not specified in the DSL, do NOT implement it. Stop and ask. Do not add, optimize or refactor anything.

That’s it. The DSL constrains. The prompt tells the LLM how to handle the constraint.

Next feature? Version the current DSL, then write the improved one. Let the LLM turn the diff between the two DSLs into the new version of the code.
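The diff step needs nothing fancy: a unified diff of two DSL versions is a small, reviewable artifact to hand to the LLM alongside the current code. A sketch using Python’s standard `difflib`; the v2 change (mailing the admin on SFTP failure) and the file labels are made-up examples, not changes from the actual connector.

```python
import difflib

DSL_V1 = """\
sftp_upload(xml)
    on fail : log + queue retry +3min
order.mark_as_sent()
return true
"""

# Hypothetical next version: also mail the admin when the upload fails.
DSL_V2 = """\
sftp_upload(xml)
    on fail : log + mail admin + queue retry +3min
order.mark_as_sent()
return true
"""


def dsl_diff(old: str, new: str) -> str:
    """Unified diff between two DSL versions, to hand to the LLM with the code."""
    return "".join(
        difflib.unified_diff(
            old.splitlines(keepends=True),
            new.splitlines(keepends=True),
            fromfile="push_order.dsl@v1",  # hypothetical version labels
            tofile="push_order.dsl@v2",
        )
    )


print(dsl_diff(DSL_V1, DSL_V2))
```

One possible design choice: the instruction then becomes “implement this diff” rather than “implement this feature”, which keeps the code change as small as the DSL change.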

Hint: if you’re an AI-assisted coder working in a not-fully-committed org, the DSL might be the bedrock on which the hammer of code review can drop.

Hint: in a refactor, guess who writes the first version of the DSL? Not you.

Note: I tried having an LLM write this article from a DSL I designed. Writing conventions are not as fixed as coding conventions. We’re not retired yet.

I did add a few em dashes floating around.
